Usability Inspection

The purpose of this lab is to learn the technique of evaluating interactive system design through expert analysis, in which experts give their feedback directly, based on their expertise or on a set of design heuristics.

After completing this lab, students will be able to apply this technique to evaluate interactive system design. They will gain insight into:

- How to evaluate a design with experts, and what phases are involved

- When to get design critique from experts

- Benefits and drawbacks of evaluation with experts

Introduction

In this lab, we are going to apply an evaluation technique called heuristic evaluation to evaluate the design of an interactive system. Heuristic evaluation was created by Jakob Nielsen and colleagues (Section 9.3.2, Human–Computer Interaction, Third Edition, Alan Dix et al.) about twenty years ago. The basic idea is to give a set of people (often other stakeholders on the design team, or outside design experts) a set of design heuristics or principles, and ask them to use those to look for problems in our design. Each of them independently walks through a variety of tasks using our design, looking for problems. Working independently is important because different evaluators find different problems. At the end of the process, they get back together and discuss what they found. This “independent first, gather afterwards” approach is how we get a “wisdom of crowds” benefit from having multiple evaluators. One reason we are covering this technique early in the lab is that it can be used either on a working user interface or on sketches of user interfaces. Heuristic evaluation therefore works really well in conjunction with paper prototypes and other rapid, low-fidelity techniques that let us get our design ideas out quickly.

When to evaluate with experts

You can get peer critique at practically any stage of your design process, but let’s highlight a few stages where it can be particularly valuable.

- First, it’s really valuable to get peer critique before user testing, because it helps you avoid wasting your test users on problems that expert reviewers would pick up anyway.

- Second, the rich qualitative feedback that peer critique provides can be really valuable before redesigning your application, because it can show you which parts of your app you probably want to keep and which parts are more problematic and deserve redesign.

- Third, sometimes you know there are problems, but you need data to convince other stakeholders to make the changes. Peer critique, especially if it’s structured, can be a great way to get the feedback you need to make the changes you know need to be made.

- And lastly, this kind of structured peer critique can be really valuable before releasing software, because it helps you do a final sanding of the entire design, and smooth out any rough edges.

Benefits and drawbacks of evaluation with experts

Heuristic evaluation is often a lot faster than testing with users: it takes just an hour or two per evaluator. The results also come pre-interpreted, because your evaluators directly provide you with problems and things to fix. Conversely, experts walking through your system can generate false positives, that is, problems that would not actually occur in a real environment.

To explain how the evaluation takes place, let us consider NTS’s website (www.nts.org.pk) and apply this technique to evaluate it.

Activity 1: First evaluation phase

The first evaluation phase generally takes a couple of hours, depending on the nature and complexity of the software. The evaluators use the software freely to get a feel for the methods of interaction and the scope of the system. During this phase, it is also important to bring all of your evaluators up to speed on the story behind your software, including any domain knowledge they might need, and to tell them about the scenario you are going to have them step through.

During this activity, we are going to walk through the NTS website just to get used to its flow, get a feel for it, and get an idea of the features it offers.

Activity 2: Second evaluation phase

In the second evaluation phase, the evaluators independently carry out another run-through while applying the chosen heuristics. Which heuristics should we use? Nielsen’s ten heuristics are a fantastic start, and we can augment them with anything else that is relevant to our domain. The evaluators focus on individual elements of the interface, look for heuristic violations, and document them. Each documented violation must include the issue, its severity rating, the heuristic(s) it violates, and a description of exactly what the problem is. We can use the severity rating scale created by Jakob Nielsen, given below.

- 0 – don’t agree that this is a usability problem

- 1 – cosmetic problem

- 2 – minor usability problem

- 3 – major usability problem; important to fix

- 4 – usability catastrophe; imperative to fix
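If evaluators record their findings in a spreadsheet or a small script, each documented violation can be captured as a fixed record. The following is a minimal Python sketch, assuming a hypothetical `Violation` record whose fields mirror the four required items (issue, severity, heuristic(s) violated, description) and a `Severity` enum that follows the 0–4 scale above. The `HEURISTICS` tuple lists Nielsen’s ten heuristics under their commonly published names; it is an assumption of this sketch, and you would extend it with any domain-specific heuristics your team adds.

```python
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    """Nielsen's 0-4 severity scale."""
    NOT_A_PROBLEM = 0  # don't agree that this is a usability problem
    COSMETIC = 1       # cosmetic problem
    MINOR = 2          # minor usability problem
    MAJOR = 3          # major usability problem; important to fix
    CATASTROPHE = 4    # usability catastrophe; imperative to fix


# Nielsen's ten heuristics (commonly published names). Extend this tuple
# with any domain-specific heuristics your team decides to add.
HEURISTICS = (
    "Visibility of system status",
    "Match between system and the real world",
    "User control and freedom",
    "Consistency and standards",
    "Error prevention",
    "Recognition rather than recall",
    "Flexibility and efficiency of use",
    "Aesthetic and minimalist design",
    "Help users recognize, diagnose, and recover from errors",
    "Help and documentation",
)


@dataclass
class Violation:
    """One documented violation (hypothetical record type for illustration)."""
    issue: str
    severity: Severity
    heuristics: list[str]  # heuristic(s) violated
    description: str

    def __post_init__(self) -> None:
        if not self.heuristics:
            raise ValueError("name at least one violated heuristic")
        unknown = [h for h in self.heuristics if h not in HEURISTICS]
        if unknown:
            raise ValueError(f"not in the chosen heuristic set: {unknown}")
        # Accept plain ints such as 3 and normalise them to Severity;
        # Severity(...) raises ValueError for anything outside 0-4.
        self.severity = Severity(self.severity)


# Violation 1 from the NTS walkthrough below, recorded in this structure.
v1 = Violation(
    issue="Unable to figure out “How did I get here?” and "
          "“How do I get back to where I came from?”",
    severity=Severity.MAJOR,
    heuristics=["Visibility of system status", "User control and freedom"],
    description="No breadcrumbs, and the top menu does not highlight "
                "the category of the current page.",
)
print(v1.severity.name, v1.heuristics)
```

Validating against a fixed heuristic list keeps evaluators’ reports comparable, which makes the later aggregation step easier.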

Violation 1

Issue: Unable to figure out “How did I get here?” and “How do I get back to where I came from?”

Severity: 3

Heuristic violated: Visibility of system status, User control and freedom

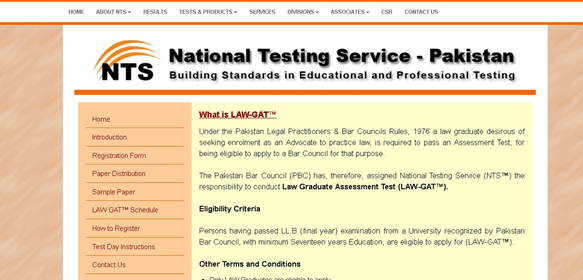

Description: Figure 3.1 shows one of the pages visited on the website. The system gives users no clue about where they are in the site, or about how to find their way back if they accidentally click the wrong link. There are no breadcrumbs, which would let users “jump” back to previous categories in the sequence without using the Back button, other navigation bars, or the search engine. Nor does the top menu highlight the category to which the current page belongs.

Figure 3.1: Information Page about Law Practitioners Test on NTS Website

Violation 2

Issue: Inconsistent color scheme

Severity: 1

Heuristic violated: Consistency and standards

Description: Figure 3.2 shows two different pages visited on the website. The two pages follow different color schemes, which makes the design inconsistent.

Violation 3

Issue: NTS logo is not clickable on many pages.

Severity: 2

Heuristic violated: Consistency and standards, Flexibility and efficiency of use

Description: The NTS logo is clickable on the home page (www.nts.org.pk) but not on many other pages, so users cannot consistently use it to return to the home page.

Figure 3.2: Screenshot of Two Different Pages of NTS Website

Activity 3: Aggregation

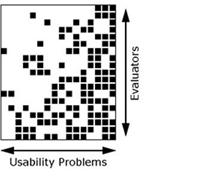

Once every evaluator has performed the evaluation individually and independently, the next step is to get together, aggregate the findings, and compile a report of all distinct usability issues that can be presented to and discussed with the design team. Because multiple evaluators may find the same problems, each distinct problem should appear only once in the report rather than being listed redundantly. As designers, we should also ask the evaluators to include an Issues-Evaluators matrix (Figure 3.3) in this report, in which rows represent evaluators and columns represent the identified issues. This matrix helps designers distinguish very good evaluators from less effective ones.

Figure 3.3: Issues-Evaluators Matrix
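The aggregation step can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed tool: the function names `aggregate` and `print_matrix` are hypothetical, and the sketch assumes duplicate reports have already been given the same issue wording. It only normalises case and surrounding whitespace. In practice, deciding that two differently worded reports describe the same problem is a judgment call made during the aggregation meeting.

```python
def aggregate(reports: dict[str, list[str]]) -> tuple[list[str], dict[str, list[bool]]]:
    """Merge per-evaluator issue lists into distinct issues plus an
    Issues-Evaluators matrix (rows: evaluators, columns: issues)."""
    issues: list[str] = []
    seen: set[str] = set()
    for found in reports.values():
        for issue in found:
            key = issue.strip().lower()  # naive duplicate detection
            if key not in seen:
                seen.add(key)
                issues.append(issue.strip())  # keep first wording seen

    matrix: dict[str, list[bool]] = {}
    for evaluator, found in reports.items():
        keys = {i.strip().lower() for i in found}
        # True where this evaluator reported the issue in that column.
        matrix[evaluator] = [issue.lower() in keys for issue in issues]
    return issues, matrix


def print_matrix(issues: list[str], matrix: dict[str, list[bool]]) -> None:
    """Print the matrix with a per-evaluator count of issues found."""
    width = max(len(e) for e in matrix)
    print(" " * width + " | " + " | ".join(f"I{n + 1}" for n in range(len(issues))))
    for evaluator, row in matrix.items():
        cells = " | ".join(" X" if hit else "  " for hit in row)
        print(f"{evaluator:<{width}} | {cells} | found {sum(row)}")
    for n, issue in enumerate(issues):
        hits = sum(row[n] for row in matrix.values())
        print(f"I{n + 1}: {issue} (found by {hits})")


if __name__ == "__main__":
    # Hypothetical evaluator names and reports, for illustration only.
    reports = {
        "Evaluator A": ["No breadcrumbs", "Inconsistent color scheme"],
        "Evaluator B": ["no breadcrumbs ", "NTS logo not clickable"],
        "Evaluator C": ["No breadcrumbs"],
    }
    print_matrix(*aggregate(reports))
```

Row totals show how many issues each evaluator found, while column totals show which problems were easy to spot (found by everyone) and which were found by only one person.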

Activity 4: Debriefing session with the design team

Finally, after all evaluators have gone through the interface, listed their problems, and combined them in terms of severity and importance, the next step is to debrief with the design team. This is a good opportunity to discuss the issues in the user interface and the qualitative feedback, and to suggest how these problems can be addressed. In this debrief session, it can be valuable for the development team to estimate the amount of effort it would take to fix each problem. For example, Violation 3 in Activity 2 (the NTS logo not being clickable on many pages) is easy to fix. Conversely, Violation 1 is a major usability issue that takes more effort to fix, but its importance will lead you to fix it anyway. For other problems, the importance relative to the cost involved may simply not justify dealing with them right now. The debrief session can also be a great way to brainstorm future design ideas, since all the stakeholders are in the room and the issues with the user interface are fresh in their minds.
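Weighing importance against cost during the debrief can be made explicit with a simple triage rule. The sketch below assumes one possible policy, which is not part of Nielsen’s method: severity 3 and 4 issues are always scheduled, severity 1 and 2 issues are scheduled only if the team’s effort estimate is under a threshold, and severity 0 items are dropped. The `Finding` type, the `triage` function, the one-day threshold, and the effort figures in the example are all hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    issue: str
    severity: int       # 0-4, from the aggregated report
    effort_days: float  # hypothetical estimate from the development team


def triage(findings: list[Finding], cheap_threshold_days: float = 1.0):
    """Order findings for the debrief and suggest an action for each.

    Assumed policy (illustrative only): severity 3-4 is always fixed,
    severity 1-2 is fixed only if it is cheap, severity 0 is dropped.
    """
    plan = []
    # Most severe first; among equal severity, cheapest first.
    for f in sorted(findings, key=lambda f: (-f.severity, f.effort_days)):
        if f.severity >= 3:
            action = "fix"
        elif f.severity >= 1 and f.effort_days <= cheap_threshold_days:
            action = "fix (cheap)"
        elif f.severity >= 1:
            action = "defer"
        else:
            action = "drop"
        plan.append((f.issue, f.severity, f.effort_days, action))
    return plan


if __name__ == "__main__":
    # Severities follow the NTS violations in Activity 2; the effort
    # figures are made-up placeholders, not real estimates.
    findings = [
        Finding("No breadcrumbs / no current-section highlight", 3, 5.0),
        Finding("Inconsistent color scheme", 1, 3.0),
        Finding("NTS logo not clickable on many pages", 2, 0.5),
    ]
    for issue, severity, effort, action in triage(findings):
        print(f"[{severity}] {issue}: ~{effort} days -> {action}")
```

The threshold is deliberately a parameter: each team will draw the line between “fix now” and “defer” differently, and the point of the debrief is to agree on it together.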

Home Activities

Students should work in groups of 3–5 for the following activities. Activities 2 and 3 must be performed individually, while Activities 1 and 4 are performed as a group.

Activity 1: Choice of System to be Evaluated

The application, system, or device should be similar, or at least related in some way, to the topic your group selected for its Final Year Project. This will help you identify the design issues that a similar system has, and lead you to design a better product than the competition.

Activity 2: First evaluation phase (Individually and independently)

During this activity, each group member will walk through the system chosen in Activity 1 just to get used to its flow, get a feel for it, and get an idea of the features it offers.

Activity 3: Second evaluation phase (Individually and independently)

During this activity, each group member will use Jakob Nielsen’s ten design heuristics to look for heuristic violations and document them. Each documented violation must include the issue, its severity rating, the heuristic(s) it violates, and a description of exactly what the problem is.

Activity 4: Aggregation (In group)

For this activity, all group members get together, aggregate their findings, and compile a report containing all distinct usability issues and the Issues-Evaluators matrix.

Assignment Deliverables

Students need to submit a report containing the following items:

- A brief description of the chosen system

- Issues identified by each group member. Each documented issue must include:

  - Issue

  - Severity

  - Heuristic(s) violated

  - Description (may include screenshots for better understanding)

- A list of all the unique issues found, and the Issues-Evaluators Matrix, where rows represent evaluators and columns represent the usability issues